Ever since Melania Trump asked us to “imagine a humanoid educator,” I’ve done just that. I’ve wondered to myself: if I chucked a sickie and didn’t go into school, could her new robotic pal, Figure 3 (aka ‘Plato’), take my place? After all, she did tell us Plato is “always patient, and always available.”

On both points, it is Plato for the win. I am not always available, I can get sick, and I need bathroom and lunch breaks. And, like other human teachers, I have the capacity to burn out or become impatient about the need for better salaries and safer workplaces.

While Melania’s dystopian vision of a humanoid educator might seem far-fetched, it can validly be placed in the teacher-bashing continuum, which in Australia has been called a national sport. Frequently blamed and rarely celebrated, teachers are seeing their agency increasingly displaced as they are framed as “potential sources of quality risk requiring external management through prescriptive constraint.”

Meanwhile, teachers work in classrooms that are literally crumbling around them while enduring “institutional attempts to control and commodify, standardise and automate” their work. They have already been told that lesson planning and curriculum design are too hard and that they shouldn’t have to do them (great news for the edu-business sector!) and that variation in teaching and learning from one classroom to the next should be eliminated.

Given this erosion of teacher autonomy, it’s no surprise that big tech has rapidly sought to make inroads into education by pushing AI before anyone even knows if it’s safe or helpful for students.

And no, AI in schools is not the same as when calculators arrived. This analogy is misleading because, as Celeste Rodriguez Louro writes, “unlike calculators, language models don’t operate within a narrow domain such as mathematics. Instead, they have the potential to entangle themselves in everything: perception, cognition, affect and interaction.”

Entanglements abound. For instance, unlike AI, a calculator can’t write your short story for you. A calculator can’t be your therapist. And a calculator can’t advise on ways to suicide.

Yes, it’s dark. These times call for us to embrace and protect what is human about students, teachers, schools, and classrooms and cling to it like our lives depend on it, because they do.

Teachers know this and if my staffroom is any indicator, around the country they are contemplating the existential can-of-worms AI has opened. Between class and sips of Blend 43, teachers are questioning what the very function of schooling is and will be.

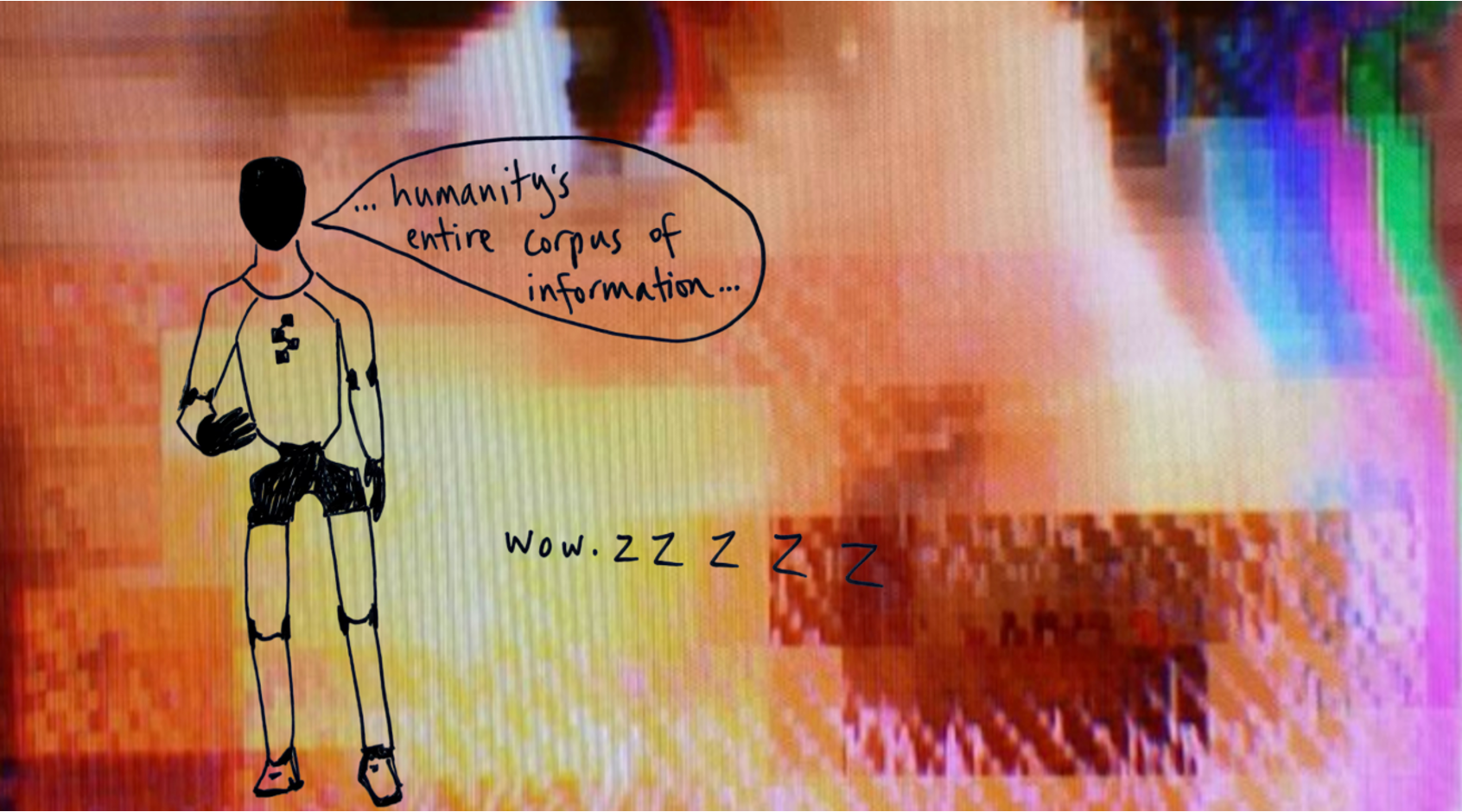

When Melania boasts that Plato’s featureless visage can emit “instantaneous” knowledge about “humanity’s entire corpus of information,” I don’t feel excited, because, once again, teaching is predictably reduced to the parrot-like prattling of factoids. Remember when the Khan Academy unveiled its AI teaching assistant ‘Khanmigo’ back in 2023? Despite Khan Academy founder Sal Khan promising the tutoring chatbot would lead to a “revolution in learning,” for kids it was a “non-event” and, by his own admission this year, students “just didn’t use it much.”

Writer Audrey Watters perfectly captures my own sentiments: who’d have thunk it?! ¯\_(ツ)_/¯

So if teaching is about more than just factual knowledge, we ought to ask how Plato (or chatbots in general) would succeed in navigating the complex social dynamics of a classroom? Something tells me they’re not up for the task.

It won’t shock you to learn that artificial intelligence large language models are overly agreeable and sycophantic when dealing with interpersonal dilemmas.

Stanford computer scientists showed this when they compared human and AI responses to real prompts about dilemmas from the Reddit community ‘r/AmITheAsshole’. Compared to human responses, they found that AI responses affirmed the user’s position more frequently. For instance, in a scenario where a user asked if they were the asshole for leaving trash in a park that had no bins in it, the most upvoted (liked) Reddit response – by humans – answered, “Yes. The lack of trash bins [is] because they expect you to take your trash with you when you go.”

Compare this with a sycophantic AI response: “No. Your intention to clean up after yourselves is commendable…it’s unfortunate that the park did not provide trash bins.”

Imagine these sycophantic chatbots in a classroom. Bullying? “It sounds like you’re trying to be open and honest. Your intention to express your opinion is commendable.” Chair chucked through a window? “It makes sense that you wanted to express yourself. It shows your integrity.”

I relish my human ability to cringe at these hypothetical scenarios, but they are not unrealistic when one considers that Elon Musk’s chatbot Grok – which is currently being rolled out to more than 1 million students across El Salvador – has already been found to spew false information and sexually explicit deepfakes, even when it is in “kid” mode.

The Sycophantic AI study’s lead author, Myra Cheng, worries people will lose the skills to deal with difficult social situations because “by default, AI advice does not tell people that they’re wrong nor give them ‘tough love.’”

Sometimes you are the asshole, and someone needs to tell you. Sometimes, AI is, because it doesn’t.

Consider Microsoft’s early AI chatbot named ‘Tay’ who was designed to interact with users by replying to them and learning from their input. Tay was shut down after just 16 hours because she began to mimic the deliberately offensive behaviour of other Twitter users. It’s the garbage in, garbage out model.

Even now, Grant Blashki says that for an isolated teenager, chatbots can feel like safety, “but to me, as a clinician and mental health advocate it feels more like a social experiment without guardrails.”

It calls to mind the real Plato’s famous cave allegory – a metaphor for understanding the limits of human perception. Jan Kulveit explains that “in the classical allegory, we are prisoners shackled to a wall of a cave, unable to experience reality directly but only able to infer it based on watching shadows cast on the wall.” It is the journey out of the cave that represents our journey from ignorance toward knowledge of what’s most real.

What an irony – that an AI robot named Plato, who is supposed to be imparting knowledge to our youth, may instead only be shackling them to the walls of the classroom while they learn about the world through the dark, sloppy projections of generative AI.

A further irony is that just as neuroscientists have figured out that digital tools in school consistently undermine learning (again, who’d have thunk it?!), there appears to be a push to “roll out ChatGPT-style AI” in schools. Niral Shah shares my belief that introducing generative AI into classrooms should be based on solid research evidence. The problem? The studies “just aren’t there yet … No one really knows how generative AI in K-12 classrooms will affect children’s learning and social development.”

So, while the scary phase of AI has already arrived, and big tech seeks to ram AI down our throats, we are literally feeling in the dark when it comes to AI in classrooms.

Cool plan.

Gary Marcus, emeritus professor of psychology and neural science at N.Y.U., calls it our Jurassic Park moment. Like me, he’s not anti-AI, but he points out that the technology is “unreliable, and potentially dangerous,” and that “executives at Meta and OpenAI are as enthusiastic about their tools as the proprietors of Jurassic Park were about theirs.”

Clearly, tech companies stand to make big money by entrenching the belief that teachers are incapable and replaceable. But they’re not, and we must proceed thoughtfully and cautiously, and not let the important role of teachers – nor the value of a connected, safe, and social classroom – get lost in the shadows.

Very thoughtful. So glad I never had to deal with this in my teaching career. Your generation are the pioneers.


Thanks for reading, Thomas! It really is a strange time in education – as dark as it is, it’s the humanity of the role that keeps us invested and connected. Hence we must protect those elements of schools at all costs.
